Motivation

High-resolution imaging and mapping of the ocean and its floor have been limited to less than 5% of global waters because of technological barriers. Sonar is the primary source of existing underwater imagery, but as a water-based system it is limited in spatial coverage by its low imaging throughput. Aerial synthetic aperture radar systems, on the other hand, have provided high-resolution imaging of the Earth’s entire landscape but cannot penetrate deep into water.

How can we get the best of both worlds?

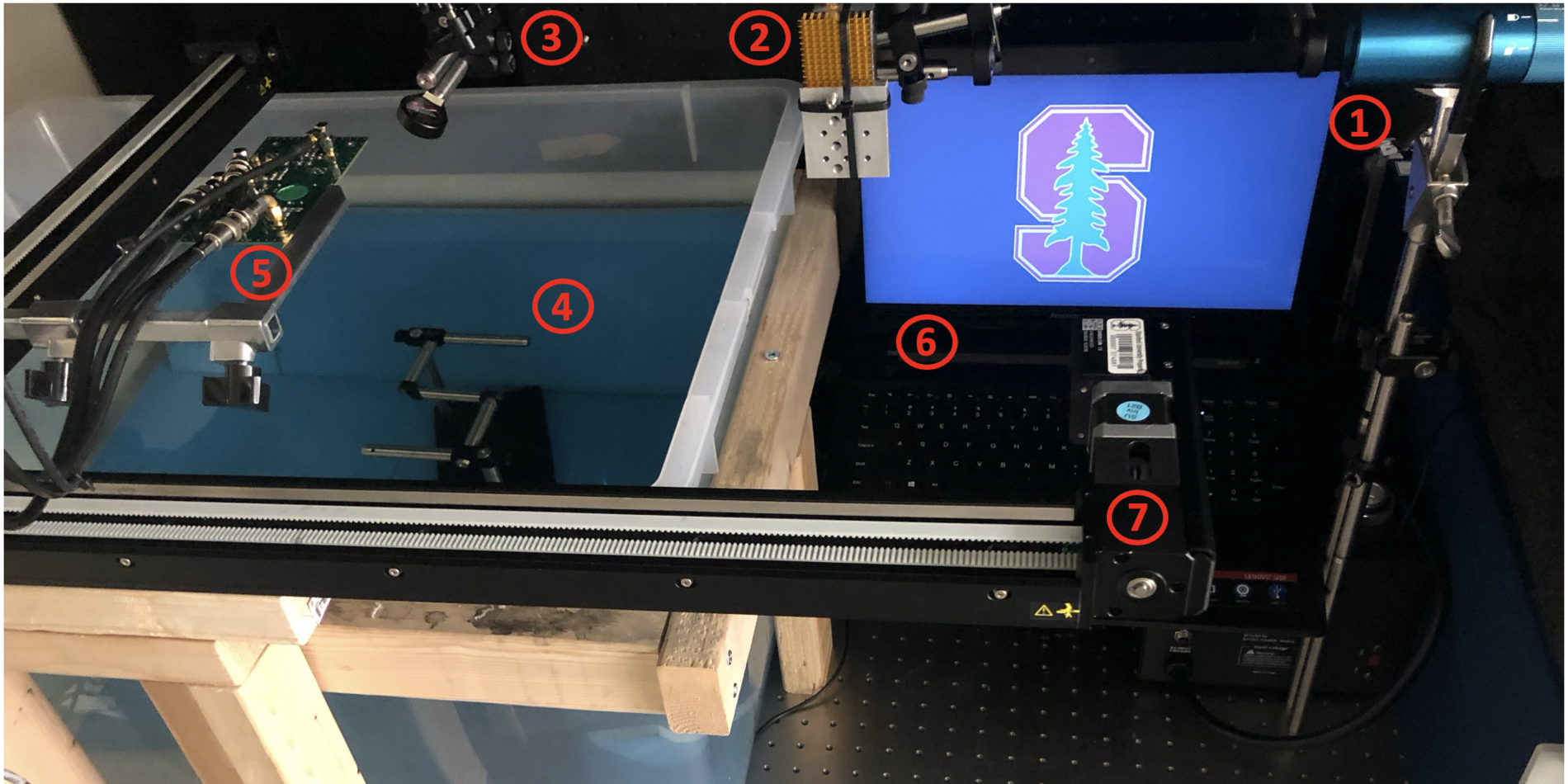

Experimental Setup

1) A laser fires a burst of infrared light

2) The light is intensity-modulated at the desired acoustic frequency

3) A mirror deflects the light down toward the water surface

4) The water absorbs the light, creating sound waves that reflect off underwater objects

5) Sound is received in air and converted to an electrical signal by a high-sensitivity sensor

6) Signals are digitized and then stored in a local computer

7) A linear stage moves the sensor to mimic measurement with an array of sensors
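
Steps 1, 2, and 7 above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: the function names, the 50 kHz modulation frequency, the burst length, the sampling rate, and the stage step size are all assumed values chosen for readability.

```python
import math


def modulated_burst(freq_hz, burst_s, sample_rate_hz, peak=1.0):
    """Samples of a laser intensity envelope modulated at freq_hz.

    Optical intensity cannot be negative, so the tone rides on an offset:
    I(t) = peak * 0.5 * (1 - cos(2*pi*f*t)) for 0 <= t < burst_s.
    """
    n = int(round(burst_s * sample_rate_hz))
    return [
        peak * 0.5 * (1.0 - math.cos(2.0 * math.pi * freq_hz * i / sample_rate_hz))
        for i in range(n)
    ]


def stage_positions(start_m, step_m, count):
    """Sensor positions visited by the linear stage, one per measurement.

    Repeating the measurement at each position stands in for a physical
    array with one sensor per position.
    """
    return [start_m + k * step_m for k in range(count)]


# Assumed example values (not taken from the experiment).
burst = modulated_burst(freq_hz=50e3, burst_s=200e-6, sample_rate_hz=1e6)
positions = stage_positions(start_m=-0.05, step_m=0.01, count=11)
```

Scanning a single sensor trades acquisition time for hardware: each stage position needs its own laser burst, but only one receive channel has to be built and digitized.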

3-D Reconstructed Image

After the signals are collected, we run an image reconstruction algorithm to convert them into a viewable image.
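
The page does not state which reconstruction algorithm is used. As one common possibility, the sketch below applies generic delay-and-sum (backprojection) to signals recorded at a set of sensor positions. It rests on loud simplifications: a 2-D geometry, a single sound speed of about 1500 m/s (roughly that of water) for the whole path, no refraction or loss at the air-water interface, and an ideal impulse echo from one point scatterer. All names and numbers are hypothetical.

```python
import math


def path_samples(px, pz, src, rx, c, fs):
    """Sample index for the path source -> pixel (px, pz) -> sensor (rx, 0)."""
    d_src = math.hypot(px - src[0], pz - src[1])
    d_rx = math.hypot(px - rx, pz)
    return int(round((d_src + d_rx) / c * fs))


def delay_and_sum(signals, sensor_xs, src, grid_xs, grid_zs, c, fs):
    """For each pixel, sum every trace at that pixel's time of flight."""
    image = []
    for z in grid_zs:
        row = []
        for x in grid_xs:
            total = 0.0
            for trace, rx in zip(signals, sensor_xs):
                n = path_samples(x, z, src, rx, c, fs)
                if 0 <= n < len(trace):
                    total += trace[n]
            row.append(total)
        image.append(row)
    return image


# Assumed example values: sound speed (m/s), sampling rate (Hz), geometry (m).
c, fs = 1500.0, 1e6
src = (0.0, 0.0)                       # sound source at the water surface
sensor_xs = [k * 0.01 - 0.05 for k in range(11)]
target = (0.0, 0.10)                   # hypothetical point scatterer

# Synthetic data: one unit impulse per trace at the echo's arrival sample.
signals = []
for rx in sensor_xs:
    trace = [0.0] * 256
    trace[path_samples(target[0], target[1], src, rx, c, fs)] = 1.0
    signals.append(trace)

grid_xs = [-0.02, -0.01, 0.0, 0.01, 0.02]
grid_zs = [0.08, 0.09, 0.10, 0.11, 0.12]
image = delay_and_sum(signals, sensor_xs, src, grid_xs, grid_zs, c, fs)

# Locate the brightest pixel as (row index, column index).
peak_value, peak_row, peak_col = max(
    (v, i, j) for i, row in enumerate(image) for j, v in enumerate(row)
)
```

In this toy case the brightest pixel is the one where the echoes from all sensor positions line up, which is the scatterer location. A real system would also need to handle the different sound speeds in air and water, noise, and the finite length of the modulated burst.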

Project News

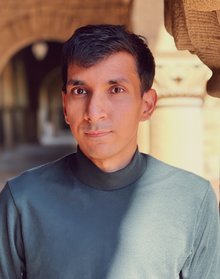

Project Leads

AJAY SINGHVI received the B.E. degree in electrical and electronics engineering from Birla Institute of Technology and Science (BITS) Pilani, India, in 2015. He is currently pursuing a joint M.S. and Ph.D. degree in electrical engineering at Stanford University.

His current research interests include the design of integrated circuit systems and algorithms for non-contact thermoacoustic/photoacoustic and ultrasound imaging, sensing, and communication applications.

Project Sponsors